Reviewed this week

Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention

Dataset Reset Policy Optimization for RLHF

⭐Rho-1: Not All Tokens Are What You Need

⭐LLM2Vec: Large Language Models Are Secretly Powerful Text Encoders

⭐: Papers that I particularly recommend reading.

New code repositories:

I maintain a curated list of AI code repositories here:

Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention

LongRoPE claimed to extend the context window of Transformer LLMs to 2 million tokens.

This new work by Google goes further than LongRoPE and claims that its approach can handle contexts of any length.

In their research, the authors introduce a technique called Infini-attention that allows Transformer-based LLMs to handle indefinitely long inputs within a constrained memory and computational budget.

Infini-attention modifies the traditional attention mechanism by incorporating a compressive memory that saves old key and value states, rather than discarding them. This enables the retrieval of these states for use in processing subsequent sequences using the attention queries. The approach combines masked local attention and long-term linear attention within a single Transformer block.
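To make the description above more concrete, here is a rough sketch of the memory retrieval, memory update, and gating, following my reading of the paper. The symbols are assumptions for illustration: M and z are the compressive memory and its normalization term for segment s, σ is a non-negative nonlinearity (such as ELU + 1), β is a learned gating scalar, and A_dot is the output of the usual masked local attention.

```latex
\begin{aligned}
A_{\text{mem}} &= \frac{\sigma(Q)\, M_{s-1}}{\sigma(Q)\, z_{s-1}} \\
M_s &= M_{s-1} + \sigma(K)^{\top} V \\
z_s &= z_{s-1} + \sum_{t=1}^{N} \sigma(K_t) \\
A &= \operatorname{sigmoid}(\beta) \odot A_{\text{mem}} + \bigl(1 - \operatorname{sigmoid}(\beta)\bigr) \odot A_{\text{dot}}
\end{aligned}
```

The memory has a fixed size regardless of how many segments have been processed, which is why the memory cost stays bounded as the input grows.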

Infini-attention’s main advantage is its ability to blend long-term memory retrieval with local contextual processing. With this modification, standard LLMs can be extended to process arbitrarily long contexts.

In their empirical evaluations, the authors demonstrate that their model with Infini-attention surpasses baseline models in long-context language modeling tasks, achieving lower perplexity on extended sequence lengths with a significantly higher memory compression ratio. A 1 billion parameter LLM equipped with Infini-attention handles up to 1 million tokens and performs well on specific tasks like passkey retrieval. Moreover, an 8 billion parameter model achieves state-of-the-art results in summarizing lengthy texts after being continually pre-trained and fine-tuned with the new attention mechanism.

However, they didn’t release their code and didn’t compare their approach with the many recent works extending context length for transformer LLMs.

Dataset Reset Policy Optimization for RLHF

This work introduces a new algorithm called Dataset Reset Policy Optimization (DR-PO) for training instruct LLMs with RLHF.

It operates under the assumption that it's possible to reset to any state during policy optimization and data collection, rather than just to initial states. DR-PO leverages this ability to reset to improve data integration from offline datasets into the online RL process.
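As a minimal sketch of what "resetting" could look like for text generation, assume a state is a prompt plus a partial response taken from the offline dataset. The function name, the `beta` mixing probability, and the uniform choice of the cut point are all hypothetical choices for illustration, not the authors' implementation.

```python
import random


def sample_reset_state(prompt_tokens, reference_tokens, beta, rng):
    """Pick the state from which the policy starts generating.

    With probability `beta` (hypothetical mixing parameter), reset to the
    prompt plus a random prefix of the dataset response. Otherwise, start
    from the prompt alone, as in standard online RL.
    """
    if rng.random() < beta:
        # randint is inclusive on both ends: the prefix can be empty or complete
        cut = rng.randint(0, len(reference_tokens))
        return list(prompt_tokens) + list(reference_tokens[:cut])
    return list(prompt_tokens)


rng = random.Random(0)
prompt = ["Summarize", ":", "the", "post"]
reference = ["A", "short", "human", "summary"]

# beta=1.0 always resets into the dataset response; beta=0.0 never does
always_reset = sample_reset_state(prompt, reference, beta=1.0, rng=rng)
never_reset = sample_reset_state(prompt, reference, beta=0.0, rng=rng)
```

The policy would then continue generating from the returned state, so part of the online rollouts start from states that the offline data already covers.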

DR-PO is simpler to implement than other policy optimization algorithms and comes with strong theoretical guarantees. It effectively learns policies that maximize rewards based on offline data.

Empirically, the authors tested DR-PO on standard RLHF datasets such as TL;DR summarization and Anthropic HH. They found that DR-PO outperforms well-known algorithms like PPO and DPO at generating summaries, and that a policy trained on one dataset does not overfit when applied zero-shot to another. DR-PO also scales comparably to PPO while maintaining superior performance across various model scales.

They released their implementation here:

GitHub: Cornell-RL/drpo

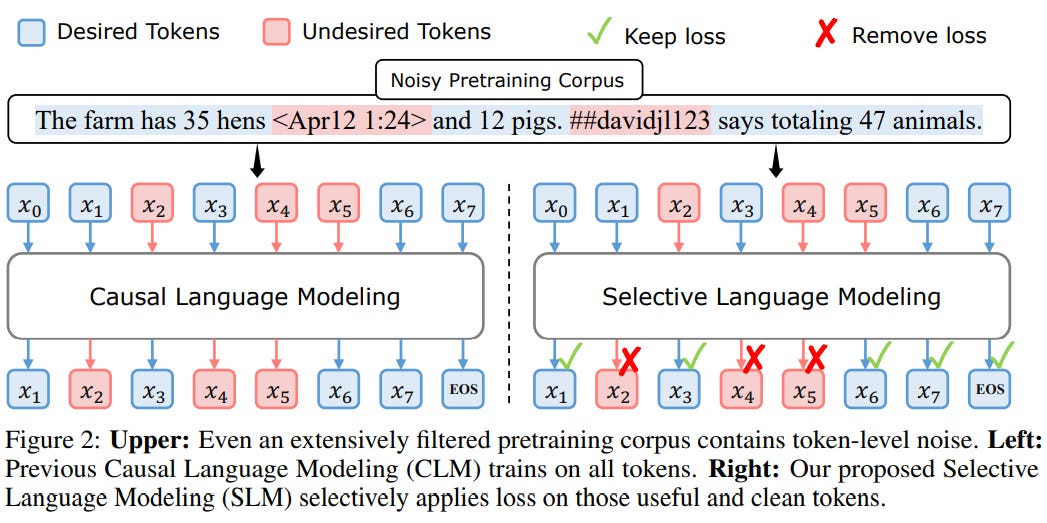

Rho-1: Not All Tokens Are What You Need

This research explores the training dynamics at the token level, focusing on how token-level loss changes during standard pre-training. In their initial analysis, the authors assessed the model’s token perplexity at various stages and classified tokens into different categories.

They discovered that significant reductions in loss were mostly confined to a specific group of tokens. Many tokens were identified as "easy tokens," which the model learned quickly, while "hard tokens" showed fluctuating losses and were resistant to convergence, often resulting in numerous ineffective gradient updates.

The researchers introduced the RHO-1 models to address these findings, employing a new Selective Language Modeling (SLM) objective. This technique involves processing the full sequence in the model but selectively omitting the loss from undesirable tokens.

The process starts with training a reference model on high-quality corpora. This reference model then scores each token of the pretraining corpus by its loss. Training then focuses on the tokens whose loss under the model being trained is significantly higher than under the reference model (a high excess loss), which are the tokens expected to be most beneficial for downstream applications.
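The selection step can be sketched in a few lines, assuming per-token losses are already available for both the model being trained and the reference model. The function names, the `keep_ratio` parameter, and the toy loss values are hypothetical and only illustrate the idea of keeping the tokens with the largest loss gap.

```python
def select_tokens(train_losses, ref_losses, keep_ratio):
    """Return the indices of the tokens with the largest loss gap."""
    # Gap between the training model's loss and the reference model's loss
    excess = [t - r for t, r in zip(train_losses, ref_losses)]
    k = max(1, int(len(excess) * keep_ratio))
    ranked = sorted(range(len(excess)), key=lambda i: excess[i], reverse=True)
    return sorted(ranked[:k])


def selective_lm_loss(train_losses, ref_losses, keep_ratio):
    """Average the training loss over the selected tokens only."""
    kept = select_tokens(train_losses, ref_losses, keep_ratio)
    return sum(train_losses[i] for i in kept) / len(kept)


# Toy per-token losses for a five-token sequence (made-up numbers)
train_losses = [2.0, 0.1, 3.5, 1.0, 0.4]
ref_losses = [0.5, 0.1, 3.4, 0.2, 0.5]

kept = select_tokens(train_losses, ref_losses, keep_ratio=0.5)
loss = selective_lm_loss(train_losses, ref_losses, keep_ratio=0.5)
```

Note that the third token has the highest raw loss but is not selected: the reference model finds it just as hard, so its loss gap is small. All tokens still pass through the model; only the loss is masked.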

In their experiments, they demonstrated that SLM significantly increases token efficiency during pretraining and enhances performance in downstream tasks. The technique effectively pinpoints tokens that are relevant to the target distribution, improving perplexity scores on benchmark tests for models trained with these selected tokens.

The authors released their code here:

GitHub: microsoft/rho

LLM2Vec: Large Language Models Are Secretly Powerful Text Encoders

This work proposes LLM2Vec, a method designed to convert any pre-trained decoder-only LLM into a universal text encoder.

LLM2Vec works in three steps:

1. Enabling bidirectional attention
2. Predicting the next token in a masked fashion (similar to BERT)
3. Employing unsupervised contrastive learning
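To illustrate the first step, here is a small sketch of the difference between the causal attention mask that decoder-only LLMs use by default and the bidirectional mask that replaces it. The helper names are hypothetical, and the masks are plain nested lists for readability (1 means "may attend").

```python
def causal_mask(n):
    """Position i may attend to positions 0..i only (decoder-only default)."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """Every position may attend to every other position."""
    return [[1] * n for _ in range(n)]


for row in causal_mask(3):
    print(row)
# [1, 0, 0]
# [1, 1, 0]
# [1, 1, 1]
```

Since the model was never trained with the all-ones mask, the two following steps can be seen as the adaptation it needs to make good use of the future tokens it can now attend to.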

LLM2Vec doesn’t need labeled datasets.

In the paper, the technique was applied to three decoder-only LLMs ranging in size from 1.3 billion to 7 billion parameters: S-LLaMA-1.3B (Sheared-LLaMA), Llama 2 7B, and Mistral 7B.

The performance of these LLM2Vec models was assessed across various tasks at both word and sequence levels. In tasks like chunking, named-entity recognition, and part-of-speech tagging, models transformed by LLM2Vec significantly outperformed leading encoder-only models, indicating its potential to generate nuanced contextualized token representations.

Moreover, on the Massive Text Embedding Benchmark (MTEB), models adapted with LLM2Vec achieved strong results among unsupervised models, with the best one setting a new unsupervised state of the art at the time.

This technique seems well suited to RAG. Instead of searching for a third-party embedding model, converting the LLM itself into a text embedding model might be enough for good retrieval.

They released their code here:

GitHub: McGill-NLP/llm2vec

If you have any questions about one of these papers, write them in the comments. I will answer them.