Teaching Reasoning Models When to Stop: Data, Rewards, and Self-Aware Decoding

The Weekly Salt #109

This week, we review:

⭐The Art of Efficient Reasoning: Data, Reward, and Optimization

Does Your Reasoning Model Implicitly Know When to Stop Thinking?

⭐The Art of Efficient Reasoning: Data, Reward, and Optimization

This work dissects what actually happens when you use RL to make chain-of-thought reasoning shorter without trashing accuracy.

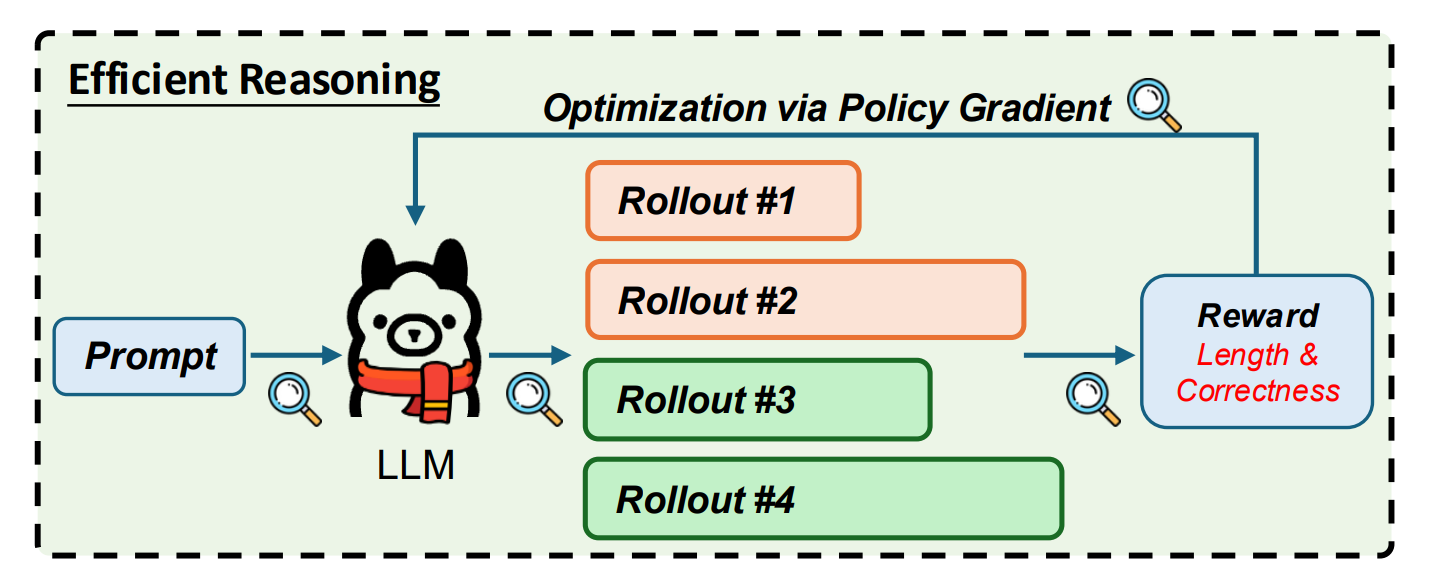

The authors focus on a simple but widely used recipe: GRPO on a DeepSeek-R1–distilled Qwen backbone, trained on DeepScaleR math prompts with a truncation-style reward that only pays out for correct answers under a target length.

They argue that efficient reasoning training reliably follows two phases: first, a “length adaptation” phase where the model rapidly compresses its rollouts to meet the token budget, and second, a slower “reasoning refinement” phase where accuracy is recovered within that shorter-length regime. To make this visible, they track length distributions conditioned on correctness and sweep performance across inference budgets from 2k to 32k tokens on math and code benchmarks like AIME’25, MATH-500, and LiveCodeBench.

The most consequential finding is about data difficulty. If you train under a length-aware reward only on very hard math problems, the policy can collapse: entropy spikes, rollouts shrink aggressively, and downstream metrics drop, because the signal is dominated by length penalties on mostly incorrect trajectories. In contrast, training on an “easy” subset yields stable entropy, smooth convergence to the target length, and essentially no loss, sometimes even a modest gain, on tough benchmarks such as AIME25. In other words, dense positive reward is more important than matching the difficulty of your target eval set, and the induced “short-answer” bias even transfers reasonably from math to code.

They then poke at the rest of the recipe: rollouts, reward shaping on negatives, and off-policy tricks. Increasing the number of rollouts per prompt noticeably accelerates length adaptation and improves asymptotic math performance, although gains on LiveCodeBench are minor and compute cost climbs quickly. Masking or down-weighting different subsets of negative rollouts leads to distinct failure modes, including ultra-short but low-quality outputs, so you cannot just zero out half the trajectories and hope for the best. Off-policy training with moderately stale rollouts can match or slightly beat on-policy early in training, but high staleness produces entropy blow-ups and length rebound, so the recommendation is essentially “on-policy, many rollouts, easy prompts.”

Applying these guidelines to multiple Qwen3 models from 0.6B to 30B roughly halves average response length on AIME25 while preserving, or for smaller models, clearly improving, Mean@8 and Pass@8.

Does Your Reasoning Model Implicitly Know When to Stop Thinking?

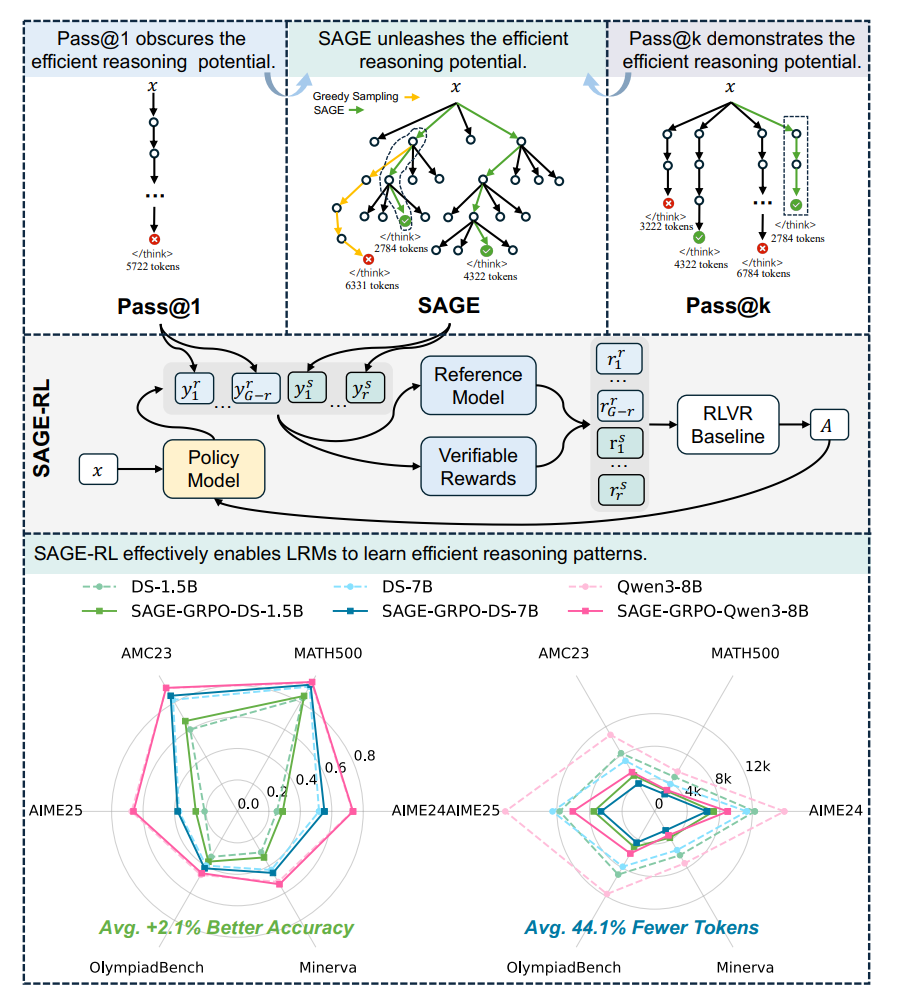

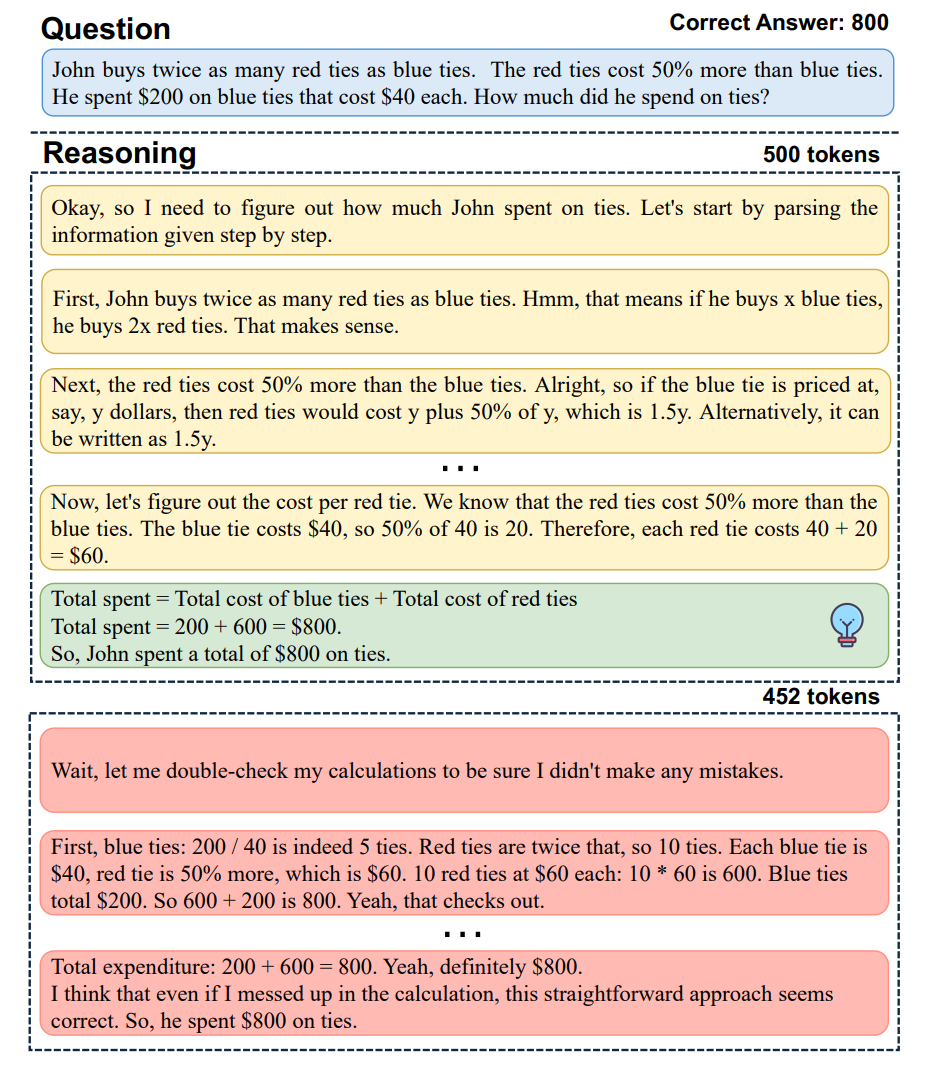

The paper starts from the now-familiar observation that RLVR-style training encourages very long chains of thought that are only loosely correlated with correctness. On math benchmarks, models often arrive at the right answer early and then keep talking: the Ratio of First Correct Step metric shows that, in a majority of correct solutions, the answer appears well before the final step, and additional post-training does little to fix this under standard pass@1 sampling.

To probe whether the model “knows” when it should have stopped, the authors introduce TSearch, a beam-style exploration over partial reasoning traces scored by average prefix log-likelihood, with a special focus on the probability assigned to a terminator token. As the exploration width increases, TSearch with this prefix score finds shorter reasoning paths and higher accuracy, whereas a variant that only looks at next-token scores collapses to short but bad outputs, evidence that concise, high-confidence solutions exist in the model but are invisible to greedy decoding.

For deployment, TSearch is turned into SAGE (Self-Aware Guided Efficient Reasoning), which operates at the level of reasoning “steps” (segments of CoT) instead of individual tokens. At each step it samples multiple candidate steps from the model, extends the current beams, scores the resulting prefixes by average log-likelihood, and accepts a completion as soon as a sampled step ends with, effectively doing step-wise beam search guided by the model’s own stopping confidence.

A degraded version that removes this structured exploration underperforms SAGE in both pass@1 and length, showing that the benefit is not just early truncation. Across several math benchmarks and three LRMs, SAGE improves pass@1 while roughly halving token usage compared to standard random sampling, with stronger models on harder datasets seeing larger accuracy gains and weaker models on easier datasets seeing larger compression.

The same idea is then folded into training: SAGE-RL simply replaces two of the eight rollouts per question in GRPO/GSPO-style RLVR with SAGE samples, which consistently yields higher pass@1 (about two points on average) and substantially better token efficiency than both plain RLVR and prior efficiency-oriented baselines.

In my view, the most interesting claim is not that yet another sampling heuristic works, but that the model’s log-probability landscape already encodes a fairly sharp notion of “I’m done” around the token, and that beam-style exploration plus a simple prefix score is enough to expose it. That said, the evidence is firmly grounded in math problems with verifiable rewards, explicit step boundaries, and a designated terminator; how much of this carries over to fuzzier domains like tool-use planning or open-ended writing is an open question.